Chapter Content

CHAPTER LESSONS – FREE

Lesson Content (7 steps)

Lesson Content (9 steps)

CHAPTER LESSONS – PREMIUM

Lesson Content (3 steps)

Lesson Content (3 steps)

Lesson Content (10 steps)

Lesson Content (11 steps)

PAST EXAMS – PREMIUM

2021 Winter Final

8 Topics

2021 Summer Final

10 Topics

Lesson Content (10 steps)

2020 Fall Final

10 Topics

Lesson Content (10 steps)

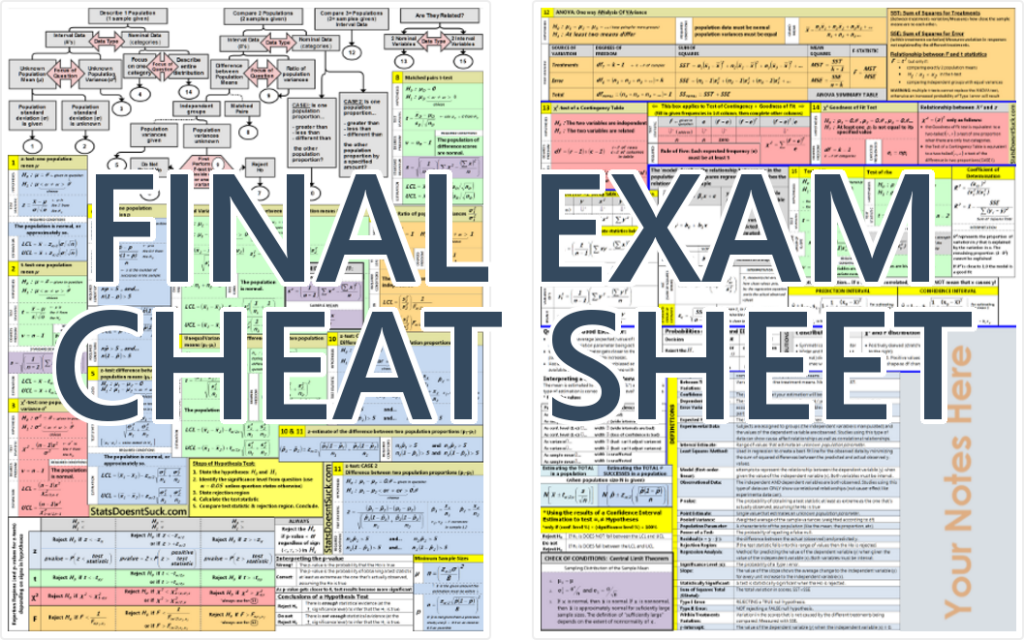

CHEAT SHEET – PREMIUM